Source: @CaitlinHafferty

This blog post is an overview and tutorial for using an automated transcription app (Otter.ai) for qualitative research. I highlight some key features, provide examples, and briefly discuss some important considerations. This is the second post in a 2-part introduction to using speech-to-text apps (see part 1 for background information and an overview of what's available in 2020). You can also check out a third post, which covers some ethical, privacy/security, and safe storage considerations for qualitative research.

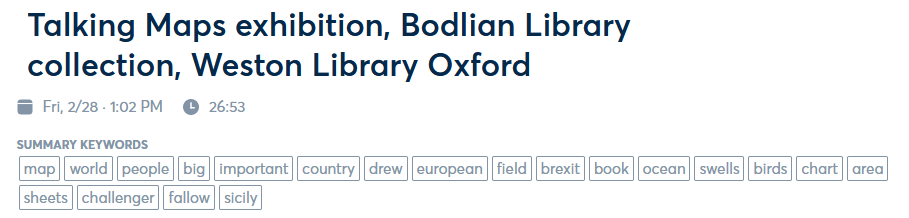

I've used Otter.ai for a while in my PhD research to record, transcribe, edit, and summarise qualitative interviews. For the example in this post, I've used an excerpt from a recording taken on my smartphone at the Talking Maps exhibition at the Weston Library in Oxford (fascinating exhibition!). This recording was taken (with permission) during a guided tour with Stewart Ackland from the Map Department at the Bodleian Library. It captures the voices of several people (we were in a big group) as we move around the exhibition, talking about different historic and contemporary maps. I've used this recording because it gives a good indication of how Otter.ai can be used in a mobile, group setting, and it also demonstrates the app's ability to integrate photos that provide more information and context for the conversation.

Having a conversation automatically transcribed in real time is a huge advantage (NB. you can also feed pre-recorded audio into the application, which then generates a transcript). I found the following features particularly useful: 1) the automatic generation of word frequencies and word clouds; 2) the ability to explore the transcript and highlight key words; 3) the ease of editing the transcript. I'll discuss each of these below, alongside some limits and considerations.

Word frequencies and word clouds

Source: @CaitlinHafferty

Otter.ai automatically finds key words (i.e. the most frequently mentioned words) in the transcript. Once it has finished processing the conversation after recording, it displays these as a list under the title. The words are ordered by how often they are mentioned, and you can click on any of them to highlight it throughout the transcript. Otter can also generate a word cloud from these frequent words, with the size of each word proportional to its frequency.

Source: @CaitlinHafferty

Word clouds are by no means a sophisticated way to analyse text; however, they do provide a quick, easy, and engaging way to see which words are most prevalent in your transcript.

For example, the images at the beginning of these blog posts (parts 1 and 2) are word clouds created from the text in each post, including words I've frequently used like 'transcription', 'otter.ai' and 'example'. In the word cloud above, you can see that our conversation at the map exhibition was (unsurprisingly!) about maps, and things that can be related to maps (country, area, land, ocean, world, Europe, people, etc.). It’s important to note that common English words (e.g. “so”, “if”, “and”) are automatically filtered out of the key words, so they don’t affect the frequency of the words you might be most interested in.
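Otter.ai does not publish exactly how it picks its key words, so the following is only a minimal Python sketch of the general idea described above, assuming a plain frequency count: lowercase the text, drop a list of common "stop words", count what is left, and scale each word's size in proportion to its count. The `STOPWORDS` set and the `key_words` and `cloud_sizes` functions are hypothetical illustrations, not part of Otter.ai.

```python
import re
from collections import Counter

# Hypothetical, deliberately short stop-word list; real tools use far longer ones.
STOPWORDS = {"a", "and", "at", "if", "in", "is", "it", "of", "on",
             "so", "that", "the", "this", "to", "we", "you"}


def key_words(transcript: str, top_n: int = 10) -> list[tuple[str, int]]:
    """Return the top_n most frequent non-stop-words, most frequent first."""
    # Lowercase and keep only runs of letters/apostrophes, dropping punctuation.
    words = re.findall(r"[a-z']+", transcript.lower())
    counts = Counter(w for w in words if w not in STOPWORDS)
    return counts.most_common(top_n)


def cloud_sizes(freqs: list[tuple[str, int]], max_pt: float = 48.0) -> dict[str, float]:
    """Give each word a font size proportional to its count (most frequent word = max_pt)."""
    top = max(count for _, count in freqs)
    return {word: max_pt * count / top for word, count in freqs}
```

For example, `key_words("So the map shows the field, and the field is next to the river. If you look at this map, the map is old.", top_n=2)` returns `[("map", 3), ("field", 2)]`, and `cloud_sizes` then gives "map" a size of 48 and "field" a size of 32. Without the stop-word filter, "the" would top the list, which is why such words are removed before counting.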

Exploring the transcript

In the previous example, you can see that ‘field’ was one of the key words in this conversation about maps. To quickly find out more about this word, click on it in the key word list and Otter.ai will highlight every occurrence in the transcript (like pressing CTRL + F in a document). Let’s have a look at where ‘field’ is mentioned in the Talking Maps transcript.

Source: @CaitlinHafferty

When we navigate to the mentions of ‘field’ in the transcript, we can see that they are clustered around 17 minutes in: the exhibition guide is talking about an interesting map from the 1600s, which depicts common agricultural practices at the time. Otter.ai also marks each position of the word ‘field’ along the time bar at the bottom, which makes it easy to skip to the mentions you are interested in.
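Jumping to a key word amounts to searching a time-stamped transcript. As a rough Python sketch, assuming the transcript is available as a list of (start time in seconds, text) chunks, which is a hypothetical format rather than Otter.ai's actual export structure, you could locate the chunks that mention a word and format their start times the way a time bar would. The `find_mentions` and `mm_ss` functions are illustrative names only.

```python
import re

# Hypothetical chunk format: (start time in seconds, text spoken in that chunk).
Segment = tuple[float, str]


def find_mentions(segments: list[Segment], keyword: str) -> list[float]:
    """Return the start time of every chunk that mentions `keyword` as a whole word."""
    # \b marks word boundaries, so "field" matches "field," but not "fields".
    pattern = re.compile(rf"\b{re.escape(keyword)}\b", re.IGNORECASE)
    return [start for start, text in segments if pattern.search(text)]


def mm_ss(seconds: float) -> str:
    """Format a time in seconds as minutes:seconds, as on a transcript time bar."""
    minutes, secs = divmod(int(seconds), 60)
    return f"{minutes}:{secs:02d}"
```

With made-up chunks starting at 1021 and 1050 seconds that mention "field", `find_mentions` returns those two start times, which `mm_ss` formats as `17:01` and `17:30`. Because of the word boundaries, a chunk that only says "Fields" is not counted; whether plurals should match is a choice you would need to make explicitly in your own analysis.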

You can also highlight, comment on, and share excerpts from the transcript really easily within the application. To do this, double-click on the text to bring up a list of option icons (alternatively, you could export the transcript to a Microsoft Word document and highlight/annotate it there).

Editing

As you might be able to tell from the 'field' excerpt above, one downside to Otter.ai is that it transcribes almost everything that is said. Naturally, people don't always speak in coherent, flowing sentences; they can change direction mid-sentence (and pause, ‘umm’ and ‘err’ a lot). As a result, you can end up with a lot of repetition, broken sentences, large chunks of monologue, and some sentences that don’t make sense.

Otter.ai can also sometimes struggle with different accents, speeds and tones of conversation (although this is constantly improving). Other issues can arise when multiple people speak at once, when there is considerable background noise, or from technical problems like loss of internet signal, speaker quality, or battery life.

Fortunately, editing your transcript in Otter.ai is really easy! The transcript can be automatically time-stamped and broken up into chunks, so you can easily skip through the recording and correct any obvious errors. Edits can be made either within the application (easiest, as you can double-click to edit text while playing the audio) or in a separate document after exporting (e.g. Microsoft Word). When you edit your transcript within the application, Otter.ai automatically realigns your text with the audio, which is useful.

Other useful editing features include saving 'personal vocabulary' such as names, places, and acronyms to increase the accuracy of transcription (you can 'teach' Otter.ai up to 5 words for free, or 200 if you upgrade).

Integrating photos

The examples below also show how you can easily integrate photos within the text, either during or after recording. This is quite useful for providing a visual representation of what the speaker is referring to in the conversation (in the example below, unsurprisingly, it’s maps again!). It's also a nice feature for researchers interested in mobile research methods (particularly those involving walking interviews, smartphones, and/or human-technology interactions), although background noise and recording multiple participants might be an issue here.

Source: @CaitlinHafferty

You might have noticed that in the pictures above, the person who is speaking is labelled as ‘Speaker 1’. At first, the speaker’s name was blank. Once I had labelled it, Otter.ai began to scan through the recording and automatically label ‘Speaker 1’ whenever it detected that this person was speaking. This is mostly accurate (ish), but you might want to double-check by listening back through your recording. You can also save the names (or code names) of ‘suggested speakers’ in the Otter app. I’ve found this useful when recording regularly occurring meetings, for example those with my PhD supervisors.

Is there anything I should consider before using it?

Otter.ai is not 100% accurate, and it might not be the best, most reliable (or most time- or cost-effective) choice for everyone. As I've discussed in this post, various factors can increase the likelihood of inaccuracies in the transcript, which will require further edits.

However, I've found that the time taken to listen through and make small edits is considerably less than typing the whole transcript out by hand. In addition, listening again helps you gain 'familiarity' with what you have recorded, allowing you to annotate and highlight the text. It's also worth ensuring that you have a reliable internet connection and checking where the microphones are on your device (e.g. most smartphones have mics at the top and bottom of the handset).

Importantly, using speech-to-text applications (including Otter.ai) for research purposes raises serious concerns regarding privacy and security. This is because sections of your recorded information could be used for training and quality-testing purposes (see the Otter.ai FAQs and full privacy policy, both listed in the resources below). It's good practice to carefully consider the privacy and security of any application or service you use for transcription, particularly if you're responsible for handling sensitive data. It is also important to think about how using apps like Otter.ai fits in with your institution’s GDPR and ethics guidelines, and/or the guidelines of the organisation you are collecting data for. For example, you may be required to gain informed consent from anyone you wish to record using Otter.ai (or similar apps), and/or gain ethical clearance from your institution to use a third-party method of recording and data storage. I wrote a blog post about these issues (listed in the resources below), which includes example text for privacy notices and ethical approval applications.

As with any digital research tool, you might want to think about the ways that technology impacts different people. The ethics of technology is an important consideration here, including how it affects the knowledge produced by the research encounter. If you’re broadly interested in digital research methods and ethics, literature on 'online research methods and social theory' is a good place to start. Given the explosion in the use of digital tools during the coronavirus pandemic and social distancing measures, this LSE Impact Blog post outlines some practical and ethical considerations of carrying out qualitative research under lockdown (this Google Doc on ‘doing fieldwork in a pandemic’, edited by Deborah Lupton, also contains some excellent resources).

Conclusion: are automated speech-to-text apps useful for qualitative research?

Automated speech-to-text applications are incredibly useful, if used with consideration and within an appropriate context. Apps like Otter.ai can save you a large amount of time by allowing a computer to perform the labour-intensive task of transcription for you. They can also help by identifying emerging themes, highlighting key words, embedding photographs, and visualising your text (such as word clouds).

Speech-to-text apps are not 100% there yet in terms of the accuracy and reliability of transcription (and thus require a certain level of manual editing after the transcript has been generated). Despite this, some manual editing isn’t necessarily a bad thing, as listening through recordings again can help you gain a better understanding of the data you have collected. As with many digital methods, these apps may also provoke concerns regarding ethics, privacy/security and GDPR, data processing, and safe storage.

Artificial intelligence and machine learning in speech recognition are developing rapidly and offer an exciting future. Apps like Otter.ai will only continue to improve with new features and increased accuracy, with vast potential to optimise research strategies and working conditions. Of course, different transcription apps and methods will work differently for different projects/contexts, so try them out for yourself to see what works best!

Links to some useful resources:

- Ethical, privacy, and security considerations for using automated transcription software. Link: https://caitlinhafferty.blogspot.com/2020/10/ethics-of-auto-transcription.html

- Tech for Good blog post. Link: https://openheroines.org/technology-can-be-used-to-increase-social-injustice-where-do-you-stand-8dbba4fa8526

- Disrupting transcription: How automation is transforming a foundational research method, by Daniela Duca. Link: https://blogs.lse.ac.uk/impactofsocialsciences/2019/09/17/disrupting-transcription-how-technology-is-transforming-a-foundational-research-method/

- Online research methods and social theory. Link: https://www.researchgate.net/publication/237837345_Online_Research_Methods_and_Social_Theory

- Carrying out qualitative research under lockdown: Practical and ethical considerations, by Adam Jowett. Link: https://blogs.lse.ac.uk/impactofsocialsciences/2020/04/20/carrying-out-qualitative-research-under-lockdown-practical-and-ethical-considerations/

- Doing Fieldwork in a Pandemic, Google Docs (crowdsourced document edited by Deborah Lupton). Link: https://docs.google.com/document/d/1clGjGABB2h2qbduTgfqribHmog9B6P0NvMgVuiHZCl8/edit#heading=h.48pen01tiqcb

- Top 5 free transcription software in 2020. Link: https://www.saasworthy.com/blog/top-free-transcription-software-in-2019/

- Best transcription services in 2020: transcribe audio and video into text. Link: https://www.techradar.com/uk/best/best-transcription-services

- Best speech to text software in 2020: Free, paid, and online voice recognition apps and services. Link: https://www.techradar.com/uk/news/best-speech-to-text-app

- Your Guide to Natural Language Processing (NLP). Link: https://towardsdatascience.com/your-guide-to-natural-language-processing-nlp-48ea2511f6e1

- A Beginners Guide to Natural Language Processing. Link: https://towardsdatascience.com/a-beginners-guide-to-natural-language-processing-e21e3e016f84

- Otter.ai. Link: https://otter.ai/

- Live interactive transcripts for Zoom. Link: https://otter.ai/zoom

- CEO Tech Talk: How Otter.ai Uses Artificial Intelligence to Automatically Transcribe Speech to Text. Link: https://www.forbes.com/sites/jeanbaptiste/2019/06/19/ceo-tech-talk-how-otter-ai-uses-artificial-intelligence-to-automatically-transcribe-speech-to-text/#393971be3872

- RGS-IBG Digital Geographies Research Group. Link: https://digitalgeographiesrg.org/

- Otter.ai FAQs. Link: https://blog.otter.ai/help-center/?ver=in_app

- Otter.ai Privacy Policy. Link: https://otter.ai/terms